Teradata vs. Snowflake: Architecture & Feature Comparison

Both Teradata and Snowflake differ in their architecture. Understanding their differences is important for bringing in the success of the Teradata to Snowflake migration.

Teradata's Architecture

Teradata is a shared-nothing architecture with On-premises MPP (Massively Parallel Processing). In this model, every component of the storage is dedicated to a specific unit. The key characteristics of Teradata’s architecture are

- Massively Parallel Processing: Queries are split and executed across multiple nodes simultaneously.

- Tightly coupled compute and storage.

- AMP-based hashing for data distribution: Responsible for data storage and retrieval.

- High licensing and maintenance costs

- Designed for predictable, structured workloads.

Snowflake's Architecture

Snowflake was mainly built for a cloud-native, multi-cluster shared data architecture that was designed for modern data workloads. The key characteristics of Snowflake’s architecture are

- Compute and Storage separation: Snowflake decouples compute from storage. This allows running a query in seconds and shutting it down immediately after.

- Cloud services layer: It is called the “brain” of Snowflake. It handles authentication, metadata management, query optimization, and security. This offers infinite scalability and high availability.

- On-Demand Elasticity: Compute resources can scale up or down instantly based on workload demands, supporting continuous workloads efficiently.

Side-by-Side Comparison Table

| S.no |

Feature |

Teradata |

Snowflake |

| 1 |

Architecture |

On-prem tightly coupled |

Cloud-native, multi-cluster shared data |

| 2 |

Scaling |

Hardware-based, manual |

Elastic, automatic |

| 3 |

Compute Storage |

Tightly coupled |

Decoupled fully |

| 4 |

Performance |

MPP with fixed resources |

MPP with dynamic resource allocation |

| 5 |

Cost Model |

Fixed licensing + Hardware |

Pay-per-use |

| 6 |

Data Sharing |

Complex and limited |

Built-in, secure data sharing |

| 7 |

Maintenance |

High DBA overhead |

Fully managed, zero patching |

Teradata to Snowflake Data Type Mapping

Migrating from Teradata to Snowflake requires careful planning for data type mapping. Teradata does not have a direct equivalent in Snowflake.

Numeric Data Types

Teradata is often more granular with its numeric definitions, whereas Snowflake tends to generalize these into the NUMBER type.

| Teradata Data Type |

Snowflake Data Type |

Migration note |

| BYTEINT |

NUMBER(3,0) |

Snowflake does not have BYTEINT |

| SMALLINT |

NUMBER(5,0) |

Standard integer |

| INTEGER/INT |

NUMBER(10,0) |

Standard 4-byte integer |

| BIGINT |

NUMBER(19,0) |

Standard 8-byte integer |

| FLOAT/REAL |

FLOAT |

Both are IEEE 754 double |

| DECIMAL |

NUMBER (p,s) |

Precision and scale map directly |

Character Data types

Teradata often defaults to bytes while Snowflake defaults to characters (UTF-8).

| Teradata Data Type |

Snowflake Data Type |

Migration note |

| CHAR(n) |

VARCHAR(n) |

Snowflake treats CHAR as VARCHAR |

| VARCHAR(n) |

VARCHAR(n) |

Snowlake supports up to 16 MB per string |

| LONG VARCHAR |

VARCHAR |

No need to specify length, Snowflake handles the ceiling |

| CLOB |

VARCHAR |

Snowflake handles large text efficiently |

Date and Time Data Types

Snowflake is flexible with timestamps, but Teradata’s unique PERIOD type requires transformation logic.

| Teradata Data Type |

Snowflake Data Type |

Migration Note |

| DATE |

DATE |

Standard YYYY-MM-DD |

| TIME |

TIME |

Standard HH:MM:SS |

| TIMESTAMP |

TIMESTAMP_NTZ |

No time zone |

| PERIOD (DATE) |

OBJECT or two DATE Cols |

Snowflake has no Period type. Map to Start_Date and End_Date. |

Binary and Complex Data Types

Teradata uses BYTE types for raw data, whereas Snowflake uses BINARY or the powerful VARIANT type for semi-structured data.

| Teradata Data Type |

Snowflake Data Type |

Migration note |

| BYTE(n) |

BINARY(n) |

Used for Fixed-length binary |

| VARBYTE(n) |

BINARY |

Used for variable-length binary |

| BLOB |

BINARY |

Standard large binary object |

| JSON |

VARIANT |

Snowflake's VARIANT is highly optimized for JSON. |

Teradata SQL to Snowflake SQL: Key Conversion Differences

Moving from Teradata SQL to Snowflake SQL is generally a relief for developers because Snowflake follows the standard ANSI SQL approach.

Common SQL Conversion Examples

Top-Level Query Syntax

Teradata often puts qualifiers at the start of the SELECT statement, whereas Snowflake follows the standard LIMIT or TOP clause logic.

Date and Time Functions

Teradata allows mathematical operations directly on dates, while Snowflake prefers explicit functions.

String Functions and Concatenation

String operations are handled differently, especially concatenation, trim(), and SUBSTR. Snowflake supports, but also provides more consistent functions.

How to Migrate from Teradata to Snowflake: 8-Step Process

Migrating from Teradata to Snowflake is a structured journey that combines assessment, transformation, and validation. To successfully move from Teradata to Snowflake, you need careful planning and precise execution. A structured method will keep disruptions to a minimum. It also helps confirm data correctness and performance in the new system.

Step 1: Audit Your Teradata Environment (1–2 weeks)

Before starting with migration, make an assessment of your existing Teradata ecosystem.

- List Your Data Assets: Identify all active databases, schemas, tables, views, and users. Document Teradata-specific items like macros, stored procedures, UDFs (User Defined Functions), and BTEQ scripts. Note any custom data types and indexing methods.

- Examine Workloads and Connections: Profile your Teradata workloads to understand query patterns and resource use. Find connections between data objects, data jobs, and the reports or tools that use them. Understanding these relationships is essential for choosing what to move first and keeping the business running.

- Define the Project's Scope and Goals: Clearly state what you want to achieve. Are you migrating all Teradata databases, or just starting with a smaller test project? Which Teradata features need to be copied? Which ones can be updated using Snowflake's newer capabilities? Set clear success targets for performance, cost, and features.

- Understand Data Structures and Code: Know that Teradata and Snowflake have different SQL styles and data types. Plan how Teradata's data definitions and commands will be translated to Snowflake. Think about how Teradata-specific SQL and scripts will be updated or rewritten. This often needs special tools or knowledge.

Step 2: Choose Your Migration Strategy

Decide on the migration strategy based on business needs and risk tolerance. Based on complexity and timeline, choose any of the three strategies below.

| Strategy |

Description |

Best For |

Effort needed |

| Lift and Shift |

Move everything at once |

Fast timelines, low PL/SQL complexity |

Low |

| Re-architecture (Phased) |

Migrate workloads incrementally |

Long-term performance, full modernization |

High |

| Hybrid |

Lift and shift first, modernize in phases |

Balance speed with optimization |

Medium |

Step 3: Set Up Snowflake Environment (1 week)

Before the full move, prepare Snowflake and test the process completely.

- Configure Snowflake: Set up your Snowflake account, virtual warehouses, databases, schemas, users, and roles as you designed them. Set up network rules, security connections, and resource monitors.

- Data Cleanup and Tuning (Optional): You can choose to clean or archive old data in Teradata before migrating it. This can make the move smaller and improve data quality in Snowflake. It is also a chance to adjust data models for Snowflake.

- RBAC (Role-based Access Control): Define roles (ACCOUNTADMIN, SYSADMIN, ANALYST). Create a separate compute cluster for Loading, Transformation, and Analytics to prevent resource contention.

- Set up IP whitelisting and Private Link for secure connectivity.

Step 4: Convert Schema and DDL (2–4 weeks)

Convert data types using the mapping table above. Translate Teradata schemas and DDL into Snowflake-compatible formats. Map datatype and constraints. Teradata PRIMARY INDEX (PI) and PARTITION BY have no direct equivalent — redesign as Snowflake clustering keys. Convert table, definitions, views, and indexes. Use SnowConvert or AWS SCT for automated partial translation.

Step 5: Migrate Historical Data (timeline varies by volume)

Move existing data from Teradata to Snowflake efficiently. Export from Teradata using TPT (Teradata Parallel Transporter) or JDBC. Stage data files to S3/Azure Blob. Load into Snowflake using bulk loading (COPY INTO). Do a validation for row counts and data consistency.

Step 6: Convert ETL Pipelines and BTEQ Scripts (2–6 weeks)

This is the time-consuming step. Replace BTEQ control logic with Snowflake Scripting or Python-based orchestration. Update ETL/ELT workflows. Replace Teradata-specific SQL logic. Optimize transformations for Snowflake performance.

Step 7: Validate and Test (1–2 weeks)

Ensure the data in Snowflake matches the source and performance meets expectations. Run row-count checks and checksums between Teradata and Snowflake. Regression testing for downstream applications.

Step 8: Cutover, Go-Live & Decommission

The move is not finished after the data is transferred. Several steps afterward are very important for long-term success.

- User Training: Give good training to all users (analysts, data scientists, developers) on Snowflake's system, its SQL style, and best practices for writing queries and managing costs.

- Update Documents and Processes: Update all process documents, data dictionaries, and system diagrams to show the new Snowflake-based setup and any changed workflows.

- Monitor and Improve: Constantly watch Snowflake's performance and credit use. Get regular user feedback. Find areas for more improvement in queries, warehouse settings, or data processes. Put cost controls and alerts in place.

- Turn off Teradata: Once you are sure that Snowflake is stable, working fully, and all data and applications have been moved and checked, plan to turn off the Teradata system. Make sure all data is backed up according to your company's rules.

- Ongoing Support and Governance: Set up a support system for users to ask questions. Put data governance rules in place for the new Snowflake system.

Migration Timeline Estimates:

| Teradata Size |

Script Complexity |

Estimated Duration |

| <1 TB |

Low |

6 - 10 weeks |

| 1TB - 10TB |

Medium |

3-5 months |

| 10 TB - 100 TB |

High |

5 - 9 months |

| 100 TB+ |

Very High |

9 - 18 months |

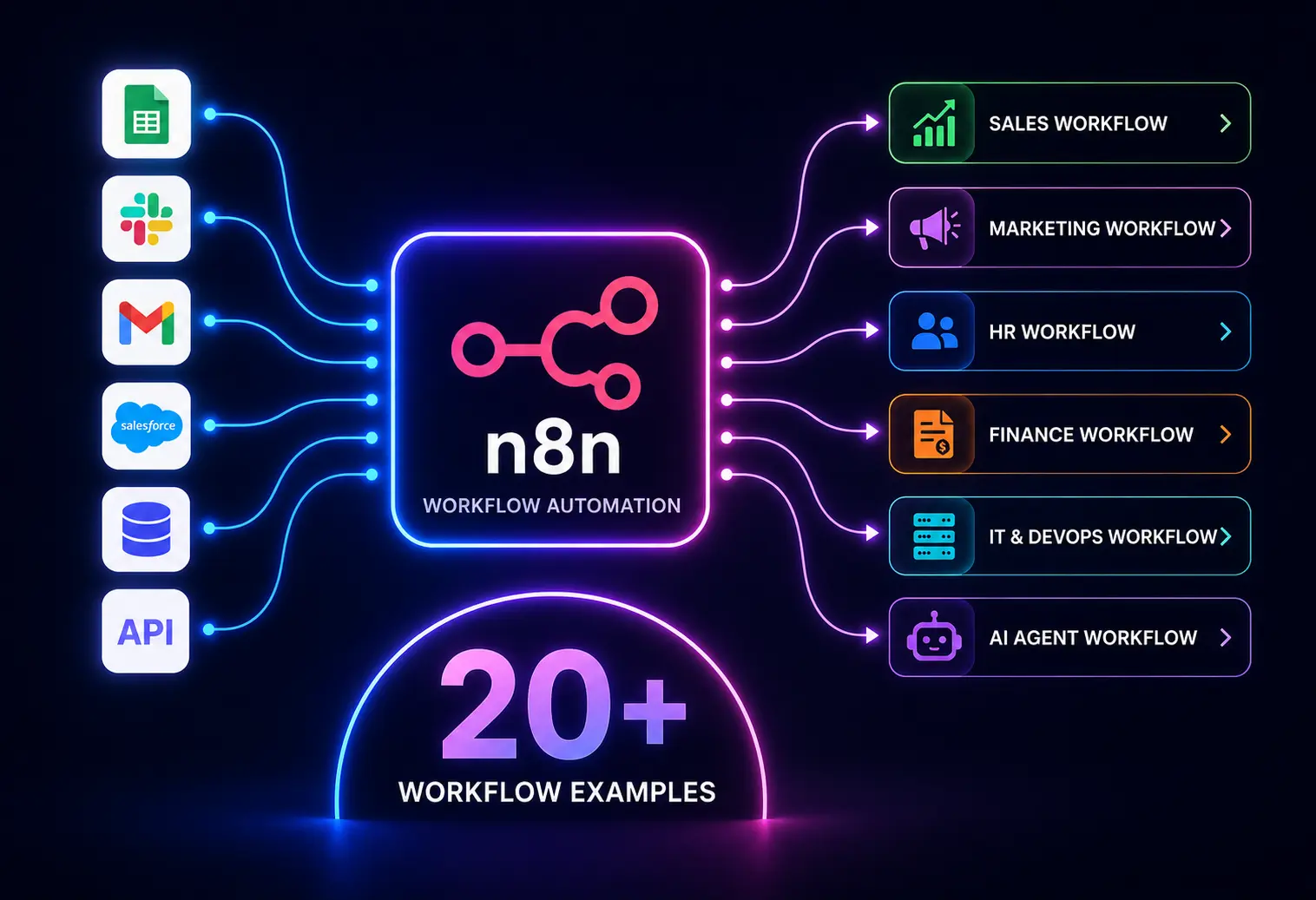

Teradata to Snowflake Migration Tools

Below is the list of some of the commonly used Teradata to Snowflake migration tools.

1. SnowConvert AI (by Snowflake)

SnowConvert is Snowflake’s dedicated tool for automated code conversion. It converts Teradata SQL, DDL, and scripts to Snowflake-compatible syntax.

Best for:

Large volumes of legacy code that have SQL-heavy migrations.

2. Snowpark Migration Accelerator

It is specifically designed for those moving legacy logic into Snowflake’s Snowpark environment.

Best for:

Modernizing heavy compute logic.

3. AWS Schema Conversion Tool (SCT)

AWS SCT helps convert database schemas and code while integrating with AWS migration services.

Best for:

Organizations that already use AWS infrastructure.

4. Data Movement and ETL Tools

The above tools move logic, and these tools move the actual data.

- Fivetran/HVR: The gold standard for high-volume data replication and Change Data Capture (CDC).

- Matillion: Built specifically for the cloud, it is excellent for transforming data after it lands in Snowflake.

- Teradata Parallel Transporter (TPT): It is used in the initial extraction phase to land data into S3/Azure Blob before Snowflake picks.

Tool Comparison Table

How to Choose the Right Tool

Choosing the right migration tool depends on team size and migration complexity.

- Assess your Migration: If migration has heavy SQL and stored procedures, prioritize automated tools such as SnowConvert. For full data movement, AWS-native environments use AWS SCT and AWS DMS.

- Align with Cloud Strategy: If you have a simple setup and no engineering team uses Fivetran. For Snowflake-centric architectures, Matillion and dbt integrate seamlessly.

- Team Expertise: If your team needs SQL-focused teams, use SnowConvert, and ETL developers use Informatica, Talend, and Matillion.

- Large-scale migrations with complex transformations require enterprise-grade tools with scalability and monitoring capabilities.

Teradata to Snowflake Migration Challenges (and How to Solve Them)

Teradata to Snowflake migration is like a high-stakes puzzle that needs to be handled properly. The common Teradata to Snowflake migration challenges teams face, and the solutions to overcome them, are

BTEQ Script Conversion

Teradata’s Basic Teradata Query (BTEQ) scripts often contain complex logic. These scripts include Teradata-specific commands, control flow, and error handling that are not compatible with Snowflake. To mitigate these, rewrite BTEQ scripts using Snowflake SQL, SnowSQL, or orchestration tools. Test the scripts to ensure functional parity.

Primary Index (PI) and Partitioning

Teradata relies on the Primary Index to physically distribute data across AMPs. Snowflake does not have indexes; it uses Micro-partitioning. To solve this problem, identify high-cardinality join columns and define Snowflake clustering keys. Run EXPLAIN on key queries in Snowflake to validate scan efficiency.

Silent Data Type Mismatches

Teradata DATE is stored as an INTEGER internally. PERIOD and INTERVAL types have no Snowflake equivalent. These don't throw errors; they silently corrupt data. To solve this, validate every column with column-level checksums, not just row counts. Pay special attention to DATE, TIMESTAMP, PERIOD, and INTERVAL columns.

Large Data Volume Migration

Moving a large volume of data can take a long time. Big-bang migrations above 10TB are impractical without optimization. To solve this, use Teradata Parallel Transporter (TPT) for high-speed parallel export. Combine with phased migration — migrate by subject area or data mart, not all at once.

ETL Pipeline Redesign

Teradata favors a heavy ETL approach where data is transformed before hitting the database. To mitigate this, re-architect pipelines using dbt for transformation logic inside Snowflake. Use Fivetran or Airbyte for ingestion. This is also an opportunity to simplify and modernize.

Teradata to Snowflake Migration Best Practices

Following best practices can greatly lessen risks and lead to a smoother switch from Teradata to Snowflake.

Thorough Planning and Review

- Before you start, do a full review of your Teradata system. This includes schemas, data amounts, connections, and data jobs.

- Clearly define the project's scope, goals, and measures of success. Develop a detailed moving plan with schedules, people, and ways to handle risks.

Use Automation for Schema and Code Changes

- Use automated tools to change Teradata data definitions (schemas, tables) and SQL code (macros, queries) to Snowflake-friendly formats.

- While these tools might not convert everything perfectly, they greatly cut down on manual work. Plan to have people review and fix the converted code.

Use a Phased or Step-by-Step Approach

- Avoid trying to move everything at once, especially for big and complex systems. Instead, move in stages, perhaps by topic or department.

- Start with a test project to check your tools and processes. This will give you early successes and lessons.

Prioritize Data Checking and Testing

- Put strong data checks in place at each stage of the move. This is to confirm data correctness and completeness.

- Run thorough performance tests of important queries in Snowflake. Compare the results against how they ran in Teradata.

Redesign and Adjust for Snowflake

- Don't just copy everything as-is. Use this as a chance to redesign data models and data processes. This will help you use Snowflake's unique features, like its ability to handle semi-structured data, use clustering keys, and separate computing from storage. Adjust queries so they run well on Snowflake's engine.

Proper User Training and Change Management

- Invest in good training for all user groups on Snowflake's features, SQL style, and best practices for query writing and cost control. Have a plan to manage the change, listen to user worries, and help people adopt the new platform.

Engage Experts or Use Specialized Tools

- For complex moves, think about working with consultants who have deep knowledge of both Teradata and Snowflake. You can also use special third-party moving platforms. Their experience can help you with difficult challenges, speed up the move, and lower risks.

Build Security and Governance from Day One

- Set up a strong security model in Snowflake, mapping roles and permissions carefully from Teradata. Set up network rules, encryption, and access controls.

- Establish data governance practices for the cloud, dealing with data quality, history, and rule-following.

Plan for Ongoing Improvements and Cost Management

- Set up continuous monitoring of Snowflake's performance and credit use.

- Regularly review query performance, warehouse usage, and storage costs. Put improvement methods and cost controls in place to get long-term value from your Snowflake setup.

Teradata to Snowflake Migration Checklist

Use the Teradata to Snowflake migration checklist below to track progress across all migration phases:

Pre-Migration

Before starting with the Teradata to Snowflake migration, one needs to focus on assessment and planning.

- [ ] Inventory all Teradata databases, tables, schemas, views, macros, stored procedures, and BTEQ scripts.

- [ ] Identify business-critical workloads and dependencies.

- [ ] Map all downstream consumers (ETL jobs, BI reports, APIs, applications).

- [ ] Categorize objects: Migrate / Modernize / Retire.

- [ ] Assess data volumes per table and total environment size.

- [ ] Select migration strategy (lift-and-shift / re-architecture / hybrid).

- [ ] Choose migration tools (SnowConvert + data pipeline tool).

- [ ] Provision Snowflake environment (account, warehouses, RBAC).

- [ ] Set up cloud storage staging area (S3 / Azure Blob / GCS).

During Migration

- [ ] Convert all DDL using the data type mapping table.

- [ ] Set up Snowflake environment (roles, warehouses, databases).

- [ ] Run SnowConvert on all BTEQ scripts and macros.

- [ ] Manually validate all converted scripts against Teradata output.

- [ ] Export historical data using Teradata TPT.

- [ ] Stage data files to cloud storage.

- [ ] Load historical data into Snowflake using COPY INTO.

- [ ] Redesign ETL pipelines for Snowflake ELT architecture.

- [ ] Reconnect BI tools (Tableau, MicroStrategy, Power BI) to Snowflake.

- [ ] Define clustering keys for high-cardinality tables.

- [ ] Maintain a parallel run (Teradata + Snowflake) if required.

Validation

- [ ] Do a row count check on every table.

- [ ] Column-level checksum — DATE, TIMESTAMP, DECIMAL columns, especially.

- [ ] Business report reconciliation — compare key metrics between Teradata and Snowflake.

- [ ] Regression test all downstream applications.

- [ ] Load and query performance benchmarks in Snowflake.

Post-Migration

- [ ] Monitor Snowflake for 48–72 hours post-cutover.

- [ ] Set up resource monitors and warehouse auto-suspend.

- [ ] Decommission Teradata after confirmed stability.

- [ ] Document Snowflake architecture for ongoing maintenance.

Why Migrate from Teradata to Snowflake?

Snowflake is a data warehouse built for the cloud. It has several key benefits over older systems like Teradata. This makes it a popular choice for many businesses that want modern tools, speed, and advanced analytics.

Snowflake was built from the ground up for the cloud. Its system separates computing power from data storage. This setup makes it possible to scale resources up or down almost instantly and independently.

This helps control costs. In contrast, Teradata's on-site hardware is often fixed and needs a lot of planning to expand.

- Performance and Multiple Users: Snowflake's unique design lets different groups and tasks run on their own separate computer clusters, called Virtual Warehouses. This means they don't fight for the same resources, even when using the same data. This greatly improves performance when many queries run at once. With Teradata, different tasks competing for resources can be a problem.

- Flexible Data Support and Connections: Snowflake is built to handle both structured and semi-structured data (like JSON, Avro, XML, and Parquet). You don't need complex processes to load this data. It also has a growing number of connectors that work with popular business intelligence, data loading, and data science tools. These connections often work more smoothly than with older systems.

- Secure Data Sharing and Teamwork: Snowflake's Secure Data Sharing feature makes it possible to share live, controlled data with other Snowflake accounts. This can be done with internal or external users without copying or migrating the data. This is a major new feature for teamwork, selling data, and department-to-department analytics. It is much simpler than old data-sharing methods.

- Cost-Effectiveness and Pricing Model: Snowflake’s pay-as-you-go model means you only pay for the storage and computing power you use. This often leads to better cost efficiency, especially for changing workloads. Teradata usually involves a large upfront payment for hardware and software, plus ongoing upkeep costs. While moving to the cloud requires careful cost management, Snowflake's model gives greater detail and control.

- Simpler Management and Upkeep: As a fully managed service, Snowflake handles most administrative work, like software updates and system management. This lightens the workload for IT teams when compared to managing on-premise Teradata systems. Teradata requires dedicated database administrators for tuning, upkeep, and upgrades.

- Ecosystem and New Features: As a modern cloud platform, Snowflake gets fast new updates and has a growing community. It regularly releases new features and improvements, keeping up with the changing world of data.

Work With Entrans to Migrate from Teradata to Snowflake

Experienced professionals from Entrans can help with the review, planning, schema, and code changes, data moving, and performance tuning. They can also assist with user training, helping you use the full power of Snowflake well.

A team of data engineers and experts brings a lot of experience from many migration projects. This helps you avoid common problems and reach your business goals faster. We take care of the end-to-end Teradata to Snowflake migration, starting from audit to go live. Apart from that, we take care of post-migration activities such as cost governance and performance optimization.

Want to know more about how expert services can simplify your cloud data warehouse move? Book a Free Teradata to Snowflake Migration Assessment.